People keep taking issue with this article’s use of “summarizing” and linking to Wikipedia… Summaries of copyrighted work are obviously not illegal.

This article is oversimplified and does a crummy job of explaining the problem. Ars Technica does a much better job explaining.

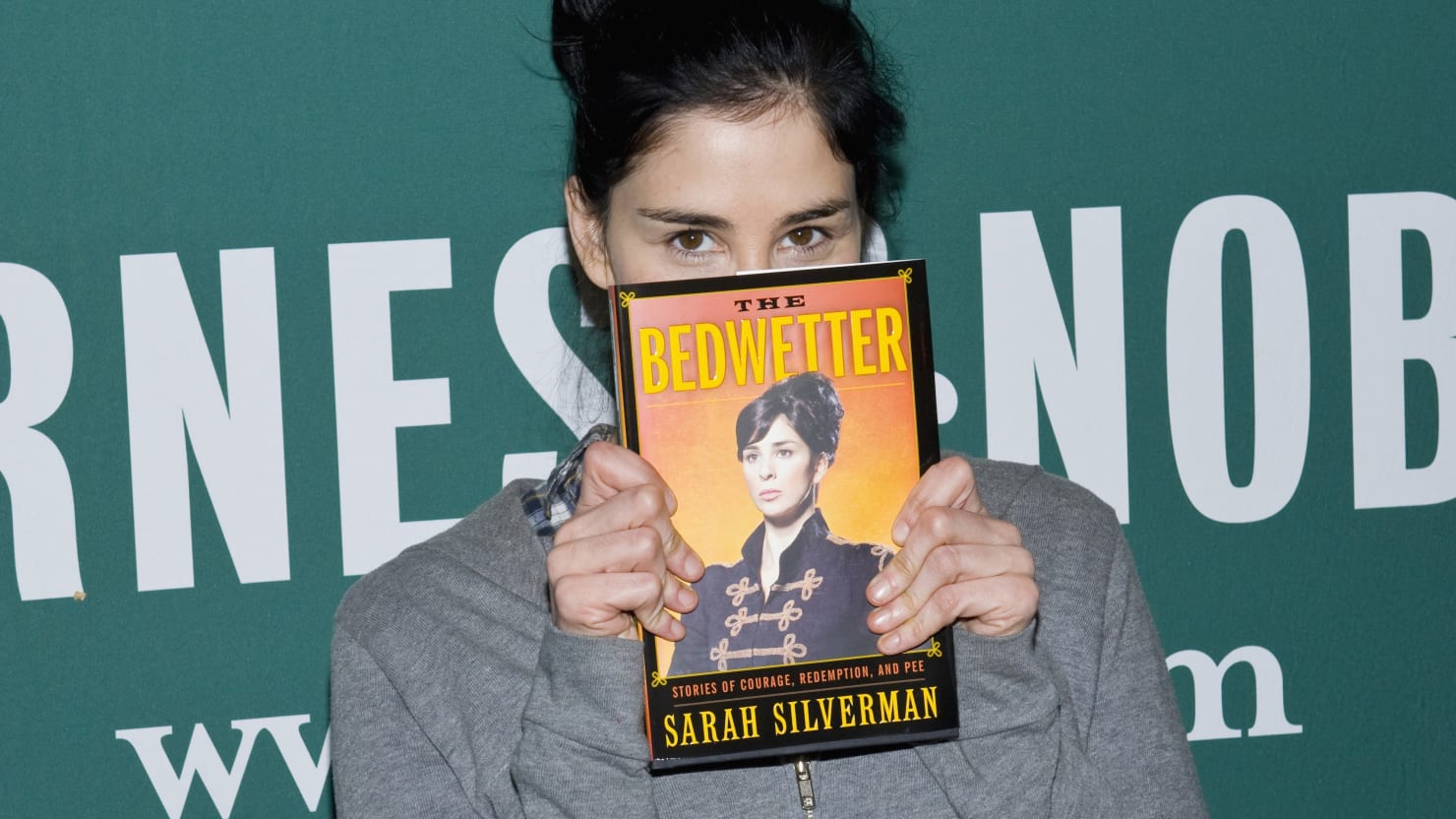

The fact that the AI can summarize these works in detail is proof that they were trained using copyrighted material without permission (which is not fair use). Sarah Silverman is obviously not going to be hurt financially by this, but there are hundreds of thousands of authors who definitely will be affected. They have every right to sue.

Why does “fair use” even fall into it? I’m not familiar with their specific license, but the general definition of copyright is:

A copyright is a type of intellectual property that gives its owner the exclusive right to copy, distribute, adapt, display, and perform a creative work, usually for a limited time.

Nothing was copied, or distributed (in a form that anybody can consider “The Work”), or displayed, or performed. The only possible legal argument they have is adapting as a derivative work. And anybody who is familiar with how an LLM works knows that the form that results from reading in content is completely different from the source.

LLMs/LDMs are not taking in billions of books and putting them into a database. It is a very lossy process. Out of the billions of images in Stable Diffusion’s training data, the resulting model is 4 GB. There is no universe where you can store billions of images in a mere 4 GB. Stable Diffusion cannot and will not reproduce a Van Gogh pixel by pixel. It can make something that kind of looks like a Van Gogh, but styles are not copyrightable.
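To put the size argument in numbers, here is a back-of-the-envelope sketch. It assumes “billions” means 2 billion images (the real count is not stated here) and treats “4 GB” as 4 × 10⁹ bytes; the 64×64 thumbnail is just a hypothetical yardstick for comparison.

```python
# Back-of-the-envelope: how many bytes of model weights exist per training image?
# Assumptions (not from the thread): "billions" taken as 2 billion images,
# and "4 GB" taken as 4 * 10**9 bytes.
MODEL_BYTES = 4 * 10**9
NUM_IMAGES = 2 * 10**9

bytes_per_image = MODEL_BYTES / NUM_IMAGES

# Hypothetical yardstick: one tiny 64x64 RGB thumbnail, uncompressed.
THUMBNAIL_BYTES = 64 * 64 * 3

print(f"{bytes_per_image:.1f} bytes of weights per training image")
print(f"a 64x64 RGB thumbnail needs {THUMBNAIL_BYTES} bytes, "
      f"about {THUMBNAIL_BYTES / bytes_per_image:.0f}x more")
```

This is only an average over the whole dataset; it says nothing about how that capacity is distributed across individual images.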

The same applies to an LLM like ChatGPT. It cannot reproduce entire books, or anywhere close to that. If you ask it to recreate Page 25 of Silverman’s book, it can’t do it. If it doesn’t even contain a minor portion of the original material, it can’t even be considered a derivative work.

They don’t have a case. They have a lot of publicity and noise, but they will lose to inevitability.

You make a lot of excellent points, but I think the main issue of contention is just using copyrighted work to train generative AI without the author’s permission regardless.

If they did ask permission, there would be no problem. But an author or artist should be given the choice if their work is going to be used to train an AI.

> You make a lot of excellent points, but I think the main issue of contention is just using copyrighted work to train generative AI without the author’s permission regardless.

If I read a book at the library and come up with an amazing, revolutionary product, then start a company and go on to make billions of dollars per year, the original book’s author has no claim to my income.

There’s no contention. This is just a money grab. Copyright doesn’t prevent people from consuming the content as they please. It simply prevents someone from passing off the original work as their own when it’s not.

Well yeah, art is made to be consumed by people.

And all art is inspired by other art. People write sci-fi books after reading other sci-fi books, etc. That’s not the issue here. The issue is artists should be able to opt out of having their work taken and fed into a big project they have no control over.

> The issue is artists should be able to opt out of having their work taken and fed into a big project they have no control over.

So in your opinion, should a university have to ask each author’s permission, one by one, before using their work as a reference for every study run there?

There is already a well established practise of getting permission in academic settings for reprinting written work/journal articles/etc. etc. And all published authors and academics understand that their work will be read, maybe used in an academic setting, summarized, debated, discussed, quoted, etc. Getting permission is definitely a thing in academia.

I’m actually surprised by the comments in here. This technology is incredibly disruptive to authors. If they are correct that their intellectual property has been misused by these companies to train LLMs, then they absolutely should have the right to prevent that.

You can be both pro-AI and pro-advancement and still respect creators’ intellectual property rights and their right not to have all their content stolen by megacorporations and used to generate profits while decimating entire industries.

I agree. This technology doesn’t exist in a vacuum. This isn’t some utopia where a human artist can focus solely on creating their art and not worry about financial gain because their survival needs are always guaranteed to be met or whatever.

Exactly this. This is the equivalent of me taking a movie, making a function, charging for it, and then being displeased when the creators demand an explanation about it.

It’s more like reading a book and then charging people to ask you questions about it.

AI training isn’t only for mega-corporations. We can already train our own open source models, so we should let people put up barriers that will keep out all but the ultra-wealthy.

> It’s more like reading a book and then charging people to ask you questions about it.

No, it’s really nothing like reading at all. Your example requires a human element. This is just the consumption of data, not reading.

Humans are the ones making these models. It’s not entirely the same thing, but you should read this article by the EFF.

I don’t think that it is even remotely close to being the same thing. I’m sorry but we shouldn’t be affording companies the ability to profit off other people’s creations without their consent, regardless of how current copyright law works.

Acting as though a human writing a summary is the same thing as a vast network of computers processing data hundreds if not thousands of times faster than a human is foolish. Perhaps it is also foolish to try to apply our current copyright laws (which already favour large corporations over individual creators) to this slew of new technology, but ignoring the fundamental difference between the two is no way to go about it. We need copyright reform, we need protections for creators, and we need to stop acting as though machine learning algorithms are remotely comparable to humans in their capabilities, responsibilities, and rights.

There is a perfectly reasonable way of doing this ethically: using content that people have provided to the model of their own volition, with their consent, either volunteered or paid for, but not scraped from an epub, regardless of whether you bought it or downloaded it from LibGen.

There are already companies training machine learning models ethically in this manner, and if creators do not want their content used as training data, it should not be.

But when the answers aren’t original thoughts but regurgitations of other people’s thoughts about the book, then it’s plagiarism. LLMs can’t provide original output, only variations on what people have made available (whether legally or not). The answer might not even be correct or make any sense. It’s just predictive text to a crazy degree.

When you copy someone’s work without attribution, that’s plagiarism. When your output is only possible because of someone else’s work over which they own copyright and the output replicated the copyrighted material, that’s copyright infringement.

LLMs can provide original output, but they can also make errors. You’d have to prove it meets the grounds for plagiarism, and to my knowledge no one’s been able to. It’s all been claims with no substance or merit so far.

An LLM can’t make something original; it can only make something derivative. But that derivative work isn’t the same as when a human makes a derivative work, because a human isn’t writing each word or phrase based on the likely “correct” next word or phrase through an algorithmic process. What humans do is orders of magnitude more complex, though it can at times also be accidental or intentional plagiarism.

In short, an LLM’s output is necessarily a string of preexisting human inputs. A human’s output, while it can be informed by and reference other human inputs, doesn’t have to replicate preexisting human inputs and can be an original analysis. The AI that is publicly available is not sophisticated enough to be more than fancy predictive text.
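The “predictive text” idea can be illustrated with a toy bigram model: count which word follows which in a tiny corpus, then repeatedly emit the most frequent successor. This is a deliberately simplified stand-in, not how ChatGPT works internally (production LLMs use transformer networks over subword tokens); the corpus and the function names are hypothetical.

```python
from collections import Counter, defaultdict


def train_bigrams(text: str) -> dict[str, Counter]:
    """Count, for each word, how often every other word follows it."""
    words = text.lower().split()
    follows: dict[str, Counter] = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def generate(follows: dict[str, Counter], start: str, length: int) -> list[str]:
    """Greedily append the most frequent next word, stopping at a dead end."""
    out = [start]
    for _ in range(length):
        candidates = follows.get(out[-1])
        if not candidates:
            break
        out.append(candidates.most_common(1)[0][0])
    return out


corpus = "the cat sat on the mat and the cat slept on the sofa"
model = train_bigrams(corpus)
print(" ".join(generate(model, "the", 5)))  # prints "the cat sat on the cat"
```

Every adjacent word pair in the output also occurs in the training text, which is the intuition behind “a string of preexisting human inputs”; whether that intuition carries over to much larger models is exactly what this thread disputes.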

You’re making a hasty generalization here, namely by making sweeping claims without evidence or examples. Also, you’re begging the question by assuming that humans are more original than LLMs, again without providing any support or justification.

Take, for example, this study that found doctors preferred Med-PaLM’s output to human doctors’. If Everything is a remix, there’s no reason LLMs can’t meet the minimum criteria for creativity, especially absent any evidence to the contrary.

> You’re making a hasty generalization here

I’m really not, though I’ll readily admit I’m simplifying things. An LLM can only create something it’s been given. I guess it can generate a string of characters and assign a definition to it, but it’s not really intentional creation. There are many similarities between how a human generates something and how an LLM does, but to argue they’re the same radically oversimplifies how humans work. While we can program an LLM, we literally do not have the capability to replicate a human brain.

For example, can you tell me what emotions the LLM had when it produced the output it did? Did its physical condition have any effect? What about its past, not just what it has learned but how it was treated? What is its motivation? A human response to anything involving creativity factors in many things that we aren’t even consciously aware of, and these are things an LLM doesn’t have.

The study you’re citing is from Google, so there’s likely some bias and selective reporting. That said, we were talking about creativity, not regurgitating facts or analyzing data. I think it’s universally accepted that as the tech gets better, it’s preferable to have a computer make the first attempt at a diagnosis, especially for a scan or large data analysis, then have a human confirm.

For the remix example, don’t forget that samples get attribution. Artists credit what they sampled and get called out when they don’t. I’m actually unclear whether an LLM can cite how it derived its output, because the developers haven’t revealed whether there’s some sort of derivation log.

No, it’s more like checking out every book from the library, and spending 450 years training at the speed of light, being evaluated on how well you can exactly reproduce the next part of any snippet taken from any book.
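The “evaluation” in this analogy corresponds to the next-token prediction objective: the model is scored by the average negative log-probability it assigns to the word that actually comes next (cross-entropy), and training nudges its weights to lower that score. The sketch below uses a hand-written, hypothetical probability table in place of a neural network.

```python
import math

# Hypothetical next-word probabilities a model might assign after each word.
# A real LLM computes these with a neural network over subword tokens.
NEXT_WORD_PROBS: dict[str, dict[str, float]] = {
    "once": {"upon": 0.9, "more": 0.1},
    "upon": {"a": 0.8, "the": 0.2},
    "a": {"time": 0.5, "hill": 0.5},
}

# Arbitrary probability floor for words missing from the table (avoids log(0)).
UNSEEN_PROB = 1e-9


def cross_entropy(snippet: list[str]) -> float:
    """Average negative log-probability (in nats) of each actual next word.

    Lower is better: a model that always put probability 1.0 on the true
    next word would score 0, i.e. it would reproduce the snippet exactly.
    """
    losses = []
    for current, actual_next in zip(snippet, snippet[1:]):
        p = NEXT_WORD_PROBS.get(current, {}).get(actual_next, UNSEEN_PROB)
        losses.append(-math.log(p))
    return sum(losses) / len(losses)


print(round(cross_entropy(["once", "upon", "a", "time"]), 3))  # prints 0.341
```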

Eventually the bad actors are going to lose a lot of money trying to litigate their theft of people’s art. It was always going to end up in the legal system. These apps are even programmed to scrub watermarks and signatures. It’s deliberate theft.

One of the largest communities on Lemmy is [email protected], so I’m not really surprised that there are people who don’t care about copyright :)

On the other hand, if a human is allowed to write a summary of a book, why should an AI not be allowed to do the same thing? Are they going to sue CliffsNotes too?

My main point is that if people don’t want their content used for training LLMs they should absolutely have the option to not have their content used to train LLMs.

Training datasets should be ethically sourced from opt-in programs, which some companies, such as Adobe, are already running.

> My main point is that if people don’t want their content used for training LLMs they should absolutely have the option to not have their content used to train LLMs.

How can one prove that their content is being used to train the LLM though, rather than something that’s derivative of their content like reviews of it?

There is already a lot of evidence that they have scraped copyrighted art and photographs for their datasets.

> if a human is allowed to write a summary of a book, why should an AI not be allowed to do the same thing?

Said human presumably would have to purchase or borrow a book in order to read it, which earns the author some percentage of the profits. If giant corps want to use the books to train their LLMs, it’s only fair that they’d have to negotiate with the publishers much like libraries do.

> Said human presumably would have to purchase or borrow a book in order to read it

Borrowing a book from a library doesn’t earn the author any more profit each time it’s lent out, I don’t think. My local library just buys books off Amazon.

What if I read the CliffsNotes and make my own summary based on that? What if I read someone else’s summary and reword it? I think that’s more like what ChatGPT is doing - I really don’t think it’s being fed entire copyrighted books as training data. There’s no actual proof LibGen or ZLib is being used to train it.

Authors do get money from libraries that buy their books, and in some places they even get paid based on how often a book is checked out.

A lot of these comments are missing a large point which is that, if the claim is true, the books are being pirated and then effectively used for a commercial application.

So the authors are losing money through this process and did not give their permission for their work to be used in a commercial way.

The decision of this case will be wildly important for the development of AI.

If a user prompts ChatGPT to summarize a copyrighted book, it will do so.

So will a human. Let’s stop extending copyright law. Also, how do you know it read the book and not a summary of it, of which there are loads on the internet?

This is why I am pro AI art. It’s no different than a human taking inspiration from other work.

Nobody comes up with anything truly original. It’s all inspired by someone before them.

I don’t know how anyone is pro-AI anything other than the pigs making money from it. Only bad can come of it. And it will.

I don’t know how anyone can be anti AI.

It’s just a tool. To say that only bad can result of it is a bold claim that doesn’t make any sense.

Can you provide an example?

Just wait and see.

It seems very improbable that they scraped a pirate website with forced registration and tight daily download limits (10 books a day max?) to get content that’s often mislabeled and not presented in a homogeneous way.

More likely it’s just using the excerpt from Amazon (which is much easier to access through the paid API) as a prompt and building on it.

There’s been ongoing suspicions that pirated content was used to train popular LLMs simply because popular datasets used for training LLMs do include such content. The Washington Post did an article about it.

Google’s C4 dataset used for research included illegal websites. What remains to be seen is whether it was cleaned up before training Bard as we know it today. OpenAI has revealed nothing about its dataset.

‘Reading my book infringes on my copyright.’ say confused writers.

This is a strawman.

You cannot act as though feeding LLMs data is remotely comparable to reading.

Why not?

Because reading is an inherently human activity.

An LLM consuming data from a training dataset is not.

LLMs are forcing us to take a look at ourselves and see whether we’re really that special.

I don’t think we are.

For now, we’re special.

LLMs are far more training data-intensive, hardware-intensive, and energy-intensive than a human brain. They’re still very much a brute-force method of getting computers to work with language.

This is what I never understood about the whole training on AI thing.

When a human creates an artwork, they don’t do it in a vacuum. They’ve had a lifetime of inspiration from artwork they’ve discovered that inspires them to create something wholly new. AI does the same thing.

AIs are trained for the equivalent of thousands of human lifetimes (if not more). There’s no precedent for anything like this.

The AIs we are talking about are large language models. They take human work as input and produce facsimiles. They are owned by individuals or companies that have no permission to copy and exploit, in this way, intellectual property tied to other people’s livelihoods.

LLMs are not sentient, they don’t have inspiration, they are not creative and therefore do not create in the sense an artist would. They are an elaborate mathematical equation.

“Training” an AI has nothing to do with training an actual living being. It’s just tuning: adjusting an algorithm incrementally until the operator is satisfied with the result. I think it’s defensible to equate this form of extraction with plagiarism.

Dude, tell me, why do you think they’ve been doing this only with books and art but not music?

That’s because music really has people protecting its assets. You can have your opinion about it, but that’s the only reason they haven’t ABUSED companies’ and people’s work in music.

It’s not reading. It’s the equivalent of me taking a movie, making a function, charging for it, and then being displeased when the creators demand an explanation.

There are a few reasons why music models haven’t exploded the way that large-language models and generative image models have. Maybe the strength of the copyright-holders is part of it, but I think that the technical issues are a bigger obstacle right now.

- Generative models are extremely data-inefficient. The Internet is loaded with text and images, but there isn’t as much music.

- Language and vision are the two problems that machine learning researchers have been obsessed with for decades. They built up “good” datasets for these problems and “good” benchmarks for models. They also did a lot of work on figuring out how to encode these types of data to make them easier for machine learning models. (I’m particularly thinking of all of the research done on word embeddings, which are still pivotal to large language models.)

Even still, there are fairly impressive models for generative music.
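For readers unfamiliar with word embeddings: each word is mapped to a vector of numbers so that words used in similar contexts end up pointing in similar directions, which is commonly measured with cosine similarity. The three-dimensional vectors below are made up for illustration; real embeddings are learned from data and typically have hundreds of dimensions.

```python
import math

# Made-up 3-dimensional "embeddings", for illustration only.
EMBEDDINGS: dict[str, list[float]] = {
    "king": [0.8, 0.6, 0.1],
    "queen": [0.7, 0.7, 0.1],
    "banana": [0.1, 0.0, 0.9],
}


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """cos(theta) = (a . b) / (|a| * |b|); 1.0 means the same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


for word in ("queen", "banana"):
    sim = cosine_similarity(EMBEDDINGS["king"], EMBEDDINGS[word])
    print(f"king vs {word}: {sim:.3f}")  # 0.990 for queen, 0.187 for banana
```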


What is the meaning of “making a function” in your sentence?